One Rack Is a Cloud

What colocation is, and why most AI founders have never heard of it

Deep thinking on building businesses designed to own forever. Not how-to content. Decision logs, frameworks, and pattern recognition.

A week ago, I published AI Real Estate. The framing was simple: the AI you use today is rented, like an apartment. There's a ladder above it. Most people don't know the ladder exists. The response was the part I didn't expect. Dozens of people messaged me with versions of the same question: "I read it. I get it. I want out. Where do I actually start?" Some were lawyers. Some were founders. Some were accountants and consultant

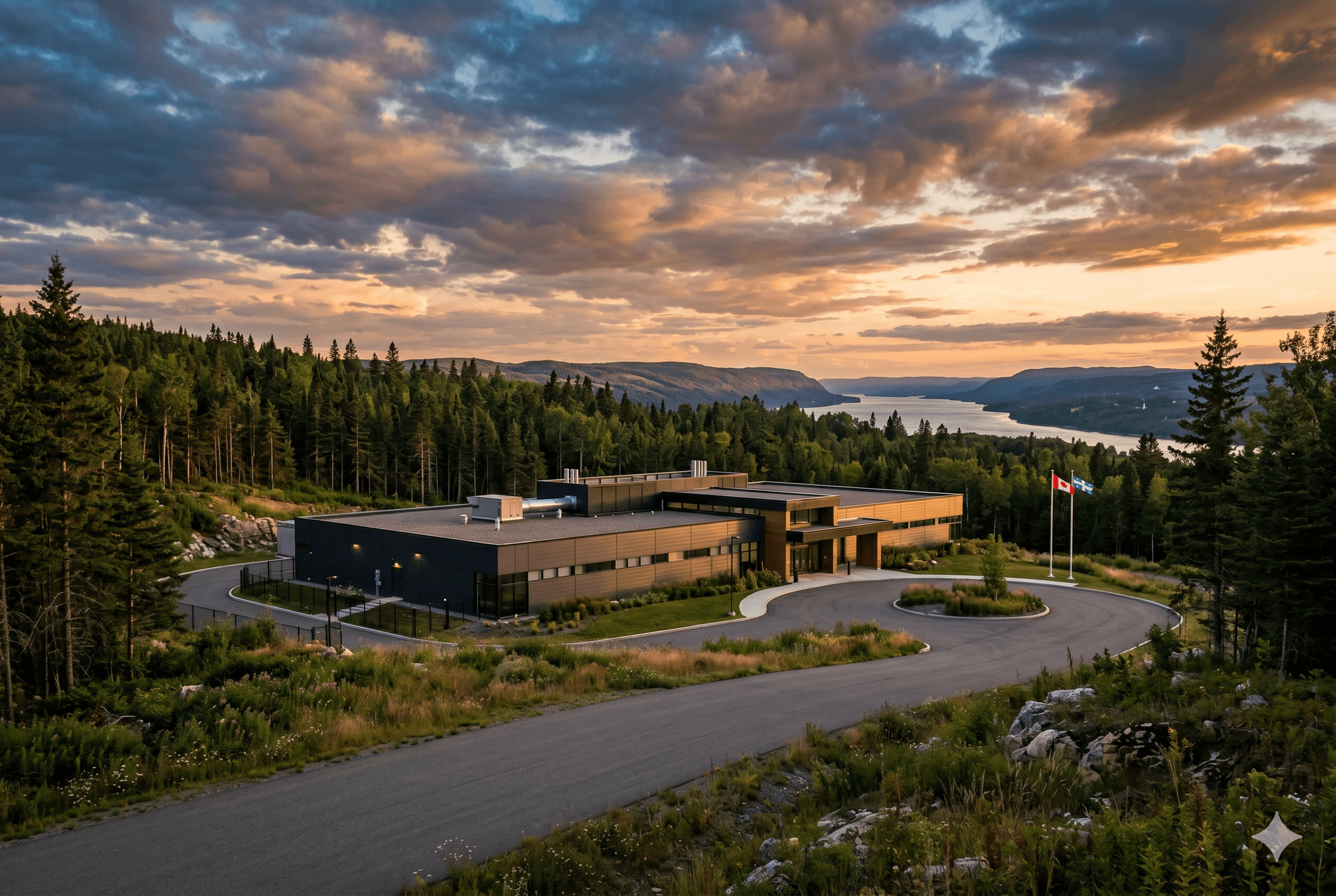

I sat down with a Canadian university last week. They were trying to articulate to industry partners what their compute offering would be. They knew "sovereign" was the right word. They couldn't define it for a buyer. They couldn't tell me what a partner would actually use it for that they couldn't already do on AWS in Montreal. That's not the university's failure. The industry calls three different things "cloud" and lets two

Three weeks ago I wrote a post called GPU Cloud Shopping in Canada: What's Actually Available. The short version: I checked every major cloud provider with a Canadian data center, trying to rent a current-generation GPU to train AI models in this country. Google Cloud Montreal had chips from 2017. AWS listed the right hardware but wouldn't let me actually run it. OVHcloud's H100s turned out to be in France, not Quebec. DigitalOc

Training an AI model is assumed to cost millions of dollars. It's the single most common misconception in the space, and it's wrong by roughly two orders of magnitude for the activity most people actually want to do. This post is a short, concrete breakdown of what fine-tuning actually costs in 2026, what it doesn't cost, and where the real spend lives. I'm writing it now because 'how much does this cost' is the first question

Honest note up front: I have not yet fine-tuned anything with Unsloth. I have not run a single training job. What I did is spend three weeks researching fine-tuning frameworks before writing a line of training code — and at the end of that research, I picked Unsloth and committed to it. This post is about why. I'm writing it now, before I start, for two reasons. First, so that if this decision ages badly I have to own it public

I'm training an AI model. It's going to run on a laptop. Three weeks ago I would have told you I was training a 70-billion-parameter model, the kind of thing that needs a data center to breathe. I'm not. I'm training a 4-billion-parameter model that runs on a Mac Mini. If the smaller one works, a larger companion model may follow. But the 4B is the bet. This is the first post in a series where I'll share what I'm building, why,

What active thesis investing actually looks like — and why I'll publish every bet I make.

Why the index gospel was built for a world that no longer exists.

What two years of quant trading taught me before I shut it down.

Open source AI didn't die. It ran out of sponsors.

AI Real Estate: From Apartment to Mansion. A $3,999 Mac Studio runs a 122-billion-parameter AI model on your desk. Running the same model on AWS costs roughly $5 an hour, or about $3,700 a month if you leave it on. The Mac pays for itself in five weeks. I
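The break-even claim in that excerpt is simple arithmetic, and a rough sketch makes it easy to check. The figures ($3,999 hardware, roughly $5 an hour) come from the excerpt; the 730-hour month and the function names are assumptions added for illustration, and the sketch ignores electricity, resale value, and any cloud discounts.

```python
# Rough break-even sketch for buying hardware vs. renting by the hour.
# Assumption: an "always on" month is 730 hours (24 * 365 / 12).
HOURS_PER_MONTH = 730
HOURS_PER_WEEK = 24 * 7


def monthly_cloud_cost(hourly_rate: float, hours: float = HOURS_PER_MONTH) -> float:
    """Cost of leaving a rented instance running for one month."""
    return hourly_rate * hours


def breakeven_weeks(hardware_price: float, hourly_rate: float) -> float:
    """Weeks of continuous rental that add up to the hardware price."""
    rental_hours = hardware_price / hourly_rate
    return rental_hours / HOURS_PER_WEEK


if __name__ == "__main__":
    price = 3999.0  # Mac Studio price quoted in the excerpt
    rate = 5.0      # approximate hourly cloud rate quoted in the excerpt
    print(f"Monthly rental: ${monthly_cloud_cost(rate):,.0f}")
    print(f"Break-even: {breakeven_weeks(price, rate):.1f} weeks")
```

Under these assumptions the monthly figure lands near the quoted $3,700 and the break-even point a little under five weeks; a workload that runs only part of the day stretches the payback period proportionally.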
