Three weeks ago I wrote a post called GPU Cloud Shopping in Canada: What's Actually Available.

The short version: I checked every major cloud provider with a Canadian data center, trying to rent a current-generation GPU to train AI models in this country.

Google Cloud Montreal had chips from 2017. AWS listed the right hardware but wouldn't let me actually run it. OVHcloud's H100s turned out to be in France, not Quebec. DigitalOcean had the right gear in Toronto, but they were sold out.

Conclusion: Canada has spent billions of dollars telling the world we want to be an AI country, and you couldn't rent a current-generation GPU here through any major provider.

That post traveled further than anything I've ever written.

It got read by other founders hitting the same walls.

It got read inside government.

It got read by the cloud providers themselves.

Then things started moving.

This is what changed in three weeks.

Same providers, same order as the original post.

I'm not endorsing anyone — every company in this post is on the same journey we are, including the ones moving fastest.

Google Cloud: from chips that predate the iPhone X to a real allocation

Three weeks ago, the best GPU you could rent in Google Cloud's Montreal region was a chip from 2017.

I wrote that, and I think it embarrassed people, because the response was immediate and completely different from what I expected.

Within days of the post going up, Google reached out.

Not a templated "thanks for your feedback" email — actual humans.

They gave us a real allocation of current-generation GPUs in a Canadian region.

They assigned us a dedicated engineer. They assigned us a startup advisor.

Things that had been blocked for weeks got unblocked in minutes.

I want to be honest about what this means and what it doesn't.

The public pricing page for Montreal still looks much the way it did three weeks ago. If you checked today, you'd see essentially the same picture I described in the original post.

What changed for me was the path from "Canadian founder with a workload" to "running on Canadian compute" — and that path now exists in a way it didn't before.

But I had a public post that hit five million views. Most founders don't.

The question I keep coming back to is whether the same response would happen for someone who didn't write the post that embarrassed everyone.

Either way: on its own terms, the response was excellent. Credit where it's due.

AWS: hit a wall, working through it

Three weeks ago, AWS was the most frustrating one for me.

The hardware exists in their catalog.

They show H100s and A100s in their Canadian region.

But when I actually tried to launch one, every GPU instance type on my account had a quota of zero.

That hasn't fully resolved yet. We've put in the requests. Conversations are happening. No green light yet on the hardware that matters most.

I don't want to make this sound worse than it is.

This is just how big enterprise procurement works — there are forms, there are approvals, there are people who have to sign off, and it takes time.

AWS is a serious company and I expect we'll get there.

It's just slower than I'd like, and I'd rather tell you "still in progress" than pretend it's solved.

Telus Sovereign AI Factory: the part of the story I missed

This is the one I want to flag clearly, because I owe readers an honest correction.

When I wrote the original post, I checked the providers I knew about: Google, AWS, OVHcloud, DigitalOcean, ISAIC.

I didn't include Telus, because I didn't know they were in this market.

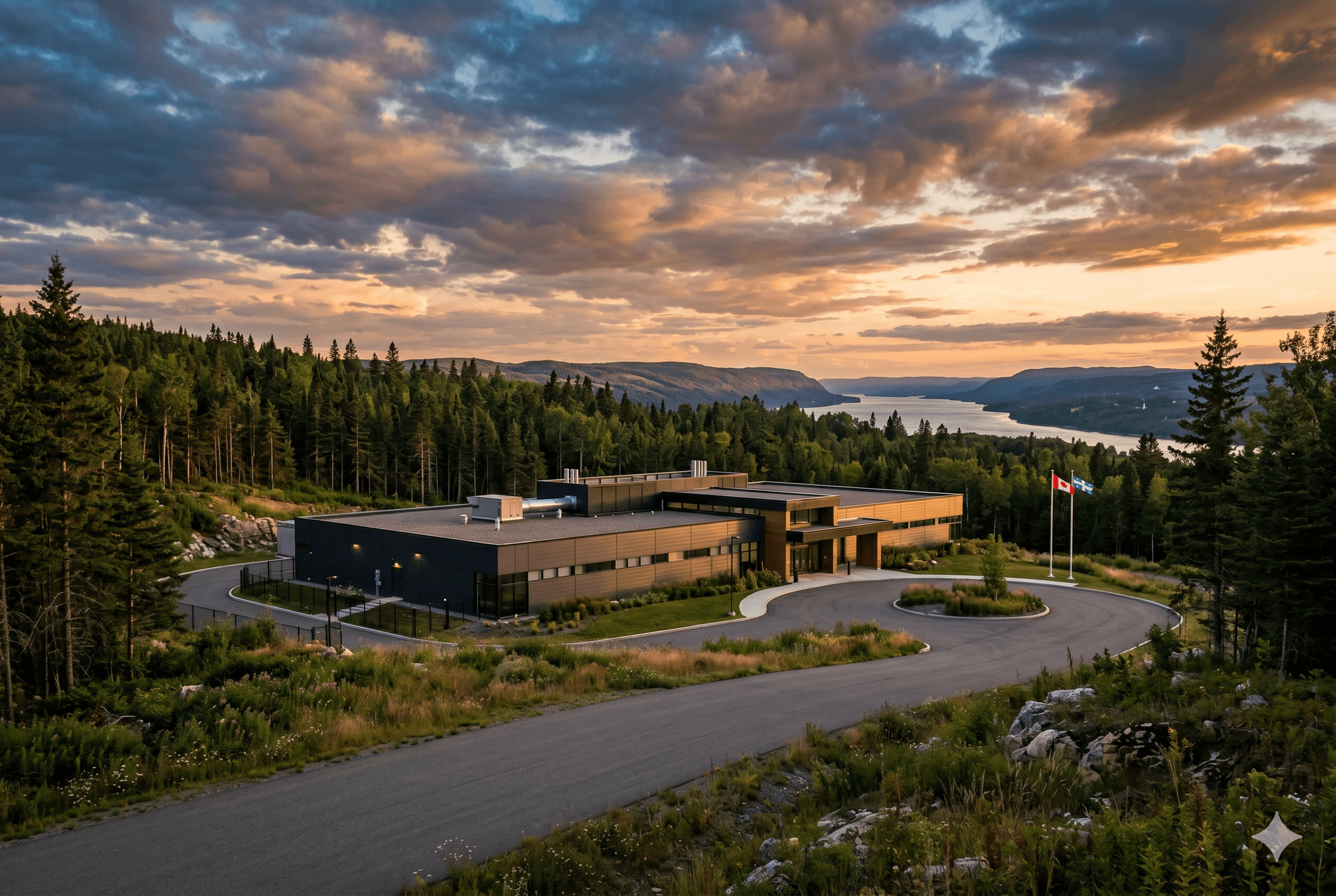

It turns out they very much do. Telus opened a sovereign AI data centre in Rimouski, Quebec in September 2025 — seven months before I wrote my post.

They have current-generation NVIDIA H200s. The facility is built, owned, and operated by a Canadian company.

Because Telus is Canadian, the facility isn't subject to the US CLOUD Act, which is one of the main reasons sovereignty is supposed to matter in the first place.

The facility was designed from day one for exactly the gap I was writing about.

And I missed them entirely.

I think this is actually worth sitting with for a second.

A G7-class sovereign compute facility opened in Quebec in September 2025.

I spent two weeks researching this market before writing the original post.

I'm probably more obsessed with this topic than 99% of Canadian founders. And it didn't surface.

That's not a criticism of Telus — they reached out kindly after the post and have been generous with their time since.

But it does say something about how broken the discovery problem in this space is.

If a founder who writes about Canadian sovereign compute for a living can miss a Canadian sovereign compute facility, what chance does a founder building, say, a healthcare AI startup have of finding it while they're trying to ship product?

We're in early discussions with Telus now. I haven't run a real workload there yet, so I can't tell you what it's like to actually use the platform. What I can tell you is that the value proposition — current-generation training hardware, Canadian-owned infrastructure, real sovereignty — is exactly what the original post said didn't exist in this country.

If it delivers what's on the box, it changes the answer to "can you build sovereign AI in Canada." That's a big deal. I'll write more once I have something real to report.

DigitalOcean: still sold out

Three weeks ago, DigitalOcean was the surprise of the post — a company most people associate with $5 droplets had H100s in Toronto for $2.99 an hour, undercutting everyone.

Three weeks later, they're still sold out.

The follow-up I noted on April 16 — talking to their sales team about reserved capacity through their 12-month plan — is still on the to-do list.

Honestly, I haven't pushed it because the other options moved faster and we have what we need for now.

DigitalOcean is still the most interesting story in the original post.

A mid-size cloud provider quietly out-shipping the trillion-dollar guys on Canadian inventory.

The capacity problem is real, but the fact that the inventory exists at all is the point.

The flood I didn't expect: GPU brokers and resellers

One thing I didn't see coming: the inbound from H100 resellers, brokers, and small GPU clouds.

More than fifty emails since the post went up. I've ignored almost all of them, and I want to explain why.

It's not that any of them are necessarily bad. Some are probably great.

The problem is that I have no way to evaluate fifty cold emails from companies I've never heard of with any rigor.

The way a tier-three GPU broker can fail you — disappear overnight, oversubscribe their capacity, run out of cash, lose access to their upstream supplier — is different from how a hyperscaler can fail you.

You don't get to find that out the hard way when you have a $3 million training run scheduled.

But the volume itself tells you something.

There is a lot of capital chasing this gap.

Some of it will turn into real infrastructure. Most of it won't.

The next twelve months will sort it out.

Where this leaves us

Three weeks ago: zero confirmed paths to current-generation GPUs in Canadian data centers.

Today: one resolved (Google Cloud), one in progress (AWS), one new option I didn't know about (Telus), one still sold out (DigitalOcean), and a flood of smaller players I can't responsibly evaluate.

That's a real change. I want to give credit where it's due — the providers who responded moved fast, took the criticism seriously, and showed up with actual capacity. That's not nothing. That's a lot.

But I want to be honest about what this means.

The system didn't fix itself in three weeks.

What happened is that one founder posted something publicly, that post traveled, providers responded to that specific founder, and the gap got closed for that one founder. That's a different thing.

The next founder who walks into this market on April 25 walks into roughly the same landscape I walked into on April 6.

The same empty pricing pages. The same quota requests sitting in portals. The Telus option, now, if they happen to find it.

The discovery problem hasn't been solved. The inventory problem hasn't been solved.

What's been solved is my problem, and that happened because I have a platform that most Canadian founders don't have.

I don't have a clean answer for what to do about that.

But I think the conversation has to keep happening publicly, because the version of this where founders quietly route around the gap by sending their workloads to Virginia is the version where Canada loses the AI sovereignty argument permanently — and nobody notices until it's too late.

So I'll keep writing. Telus update when I have one. AWS update when it lands. Whatever the next thing is when I find it.

The gap is closing. Slowly. Unevenly. With a lot of cold calls and follow-up emails in between.

But it's closing.

Follow-up to GPU Cloud Shopping in Canada: What's Actually Available (April 7, 2026).

Status reflects what I know as of April 24, 2026. Not an endorsement of any provider — every company in this post is on the same journey we are.

George Pu is the founder of Alpine Pacific Trading, building sovereign AI on Canadian infrastructure.